Email Marketing Metrics You Should Track to Make Smarter Campaign Decisions

Track the most important email marketing metrics to measure performance, optimize campaigns, and make smarter, data-driven decisions.

Shahin Alam

In all my time working with email, I have come across a certain phenomenon numerous times. It starts with you sending a campaign.

You give it a few hours, then open the dashboard. Rows of percentages stare back at you. Some look fine, while others feel disappointing.

A few may even feel confusing. You know that you should be learning something from all of this, but instead you are left wondering which numbers actually mean something and deserve your attention, and which ones are just noise.

This moment is familiar to anyone who works with email regularly. Reports keep getting more and more detailed by the day, yet clarity remains just as hard to find.

Somewhere between opens, clicks, and revenue, the real story gets lost. That is usually the cue to go looking for guidance on the email marketing metrics you should track. You may hope for a clean list that solves the problem, but that is rarely the case.

The issue is not a lack of data; you can work with the data you have. The difficulty lies in knowing how to read it without chasing the wrong signals.

Many teams end up optimizing for numbers that feel important but do not change any decisions. Others ignore early warning signs because they seem minor at first.

Before getting into specific metrics that make email marketing a success, take a minute to slow down and reset your expectations.

The goal here is not to track everything, but to understand which metrics actually help you make better campaign choices, and which ones distract you from your email goals.

Key Takeaways

- Most email reports feel overwhelming because they mix useful signals with distracting ones. Experienced marketers don’t chase every number; they look for patterns that explain email behavior.

- A metric only matters if it helps you make a decision. If a number looks impressive but does not change how you plan the next send, it is probably getting more attention than it deserves.

- Performance shifts don’t have to be dramatic to matter. Small, consistent changes often reveal more about audience intent than sudden spikes that disappear after one campaign.

- Design, timing, and content choices affect results. The people who improve fastest pay close attention to how these elements influence engagement rather than blaming the list or the offer.

- Clarity comes from context, not dashboards. When you understand why something moved, you are better equipped to make smarter campaign decisions.

Email Marketing Metrics You Should Track Are Often Misunderstood

Most teams do not ignore metrics. In fact, they watch them closely. Yet the problems remain, because of how teams approach and interpret metrics once they appear on the screen.

I have lost count of how many times a campaign was labeled a success because one percentage looked good while everything else pointed in the opposite direction. The moment open rates go up, relief sets in.

But at the same time, clicks may dip and revenue may lose momentum. Both get explained away quickly, so the report keeps feeling positive.

Like a win.

This happens because tracking numbers and percentages is easy, while understanding what they are really saying takes more work.

A lot of this comes from habit. It is common practice for marketers to inherit dashboards from previous teams or copy what others are measuring, without ever questioning why.

Over time, the numbers become familiar, to the point that they even feel comforting, despite the fact that no one can clearly explain how they relate to and influence the next decision.

When that happens, metrics lose their value. They turn into decoration instead of direction.

There is also a tendency to treat every number as equal, when the truth is that they are not. Some metrics deserve attention early, while others only matter when viewed over a longer stretch.

Industry data often puts email’s average return at around thirty-six dollars for every dollar spent. That kind of performance does not come from obsessing over surface-level numbers. It comes from understanding what actually moves the needle toward the outcome you want.

When teams focus on the wrong signals, patterns tend to repeat.

- Teams make campaign changes after single sends instead of watching results over time

- Design and content decisions get blamed for problems they did not cause

- Teams chase short-term wins that quickly disappear

Metrics are meant to help. They are not misleading on their own, but become so when teams start treating them as final answers instead of clues.

Once you start reading them as part of a broader story, they stop feeling confusing and start earning their place in the reporting process.

The Engagement Metrics That Show How Readers Interact With Your Emails

Many teams glance at the surface-level metrics and call it a day. That is the wrong approach. Look past the surface numbers, and you will find engagement metrics that reveal much more.

At first, the numbers may seem unpredictable, behaving differently from one send to the next. That variation can be confusing, but it is exactly what teams should look into. The scattered numbers reflect the reader’s mood, timing, relevance, and sometimes plain curiosity.

Looking at them in isolation rarely helps. However, when you watch how they move together over time, the insights start to appear.
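One simple way to watch how a metric moves over time, rather than reacting to a single send, is a trailing average. The sketch below is a minimal Python illustration under stated assumptions: the per-send click-through rates are hypothetical, and the four-send window is an arbitrary choice, not a recommendation from this article.

```python
from statistics import mean


def rolling_mean(values: list[float], window: int = 4) -> list[float]:
    """Trailing mean of the last `window` values, one result per position
    once enough sends are available."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        mean(values[i - window + 1 : i + 1])
        for i in range(window - 1, len(values))
    ]


# Hypothetical click-through rates (%) for eight consecutive sends.
ctr_by_send = [2.1, 3.4, 1.8, 2.6, 2.2, 2.9, 2.0, 2.5]

# The first trailing average is available at send 4.
for send_number, smoothed in enumerate(rolling_mean(ctr_by_send), start=4):
    print(f"send {send_number}: trailing average {smoothed:.2f}%")
```

Individual sends swing between 1.8% and 3.4%, while the trailing average stays between roughly 2.4% and 2.5%. A sustained move in the smoothed line is the kind of change worth acting on.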

Open rate matters, but not in isolation

Open rate has lost much of its credibility in recent years, and not without reason. Privacy changes have made it harder to interpret than ever, and some marketers have written it off completely.

In practice, though, it still tells you something useful when you study it carefully.

In real campaigns, open rates usually fall within a range rather than settling on a fixed target. For many lists, that range tops out in the low thirties.

When the rates drift outside that familiar zone, it usually signals a shift in expectations, timing, or trust. What it does not tell you, however, is whether or not the email delivered on its promise.
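Because benchmarks vary so much, it can help to judge "outside the familiar zone" against a list's own history. The sketch below assumes open rate is unique opens divided by delivered emails (platforms define it differently), uses hypothetical past rates, and applies a two-standard-deviation rule of thumb that is an assumption, not an industry standard. Privacy features can also register opens no person made, so treat the number as a relative signal.

```python
from statistics import mean, stdev


def open_rate(unique_opens: int, delivered: int) -> float:
    """Unique opens as a percentage of delivered emails."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    return 100 * unique_opens / delivered


def outside_usual_range(history: list[float], latest: float, k: float = 2.0) -> bool:
    """Flag `latest` if it sits more than k standard deviations from the
    list's own historical mean (a simple rule of thumb, not a standard)."""
    if len(history) < 2:
        raise ValueError("need at least two past sends")
    mu, sigma = mean(history), stdev(history)
    return abs(latest - mu) > k * sigma


# Hypothetical open rates (%) from five earlier sends to the same list.
past_open_rates = [24.0, 26.5, 25.2, 27.1, 23.8]

latest = open_rate(unique_opens=1_540, delivered=9_800)
print(f"latest open rate: {latest:.1f}%")
print("outside usual range:", outside_usual_range(past_open_rates, latest))
```

A flag like this only says something shifted. As the text notes, it cannot tell you whether the email delivered on its promise.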

Sure, subject lines and preview text play a role, but so does consistency. When readers open an email and see a different layout or unfamiliar branding, they hesitate.

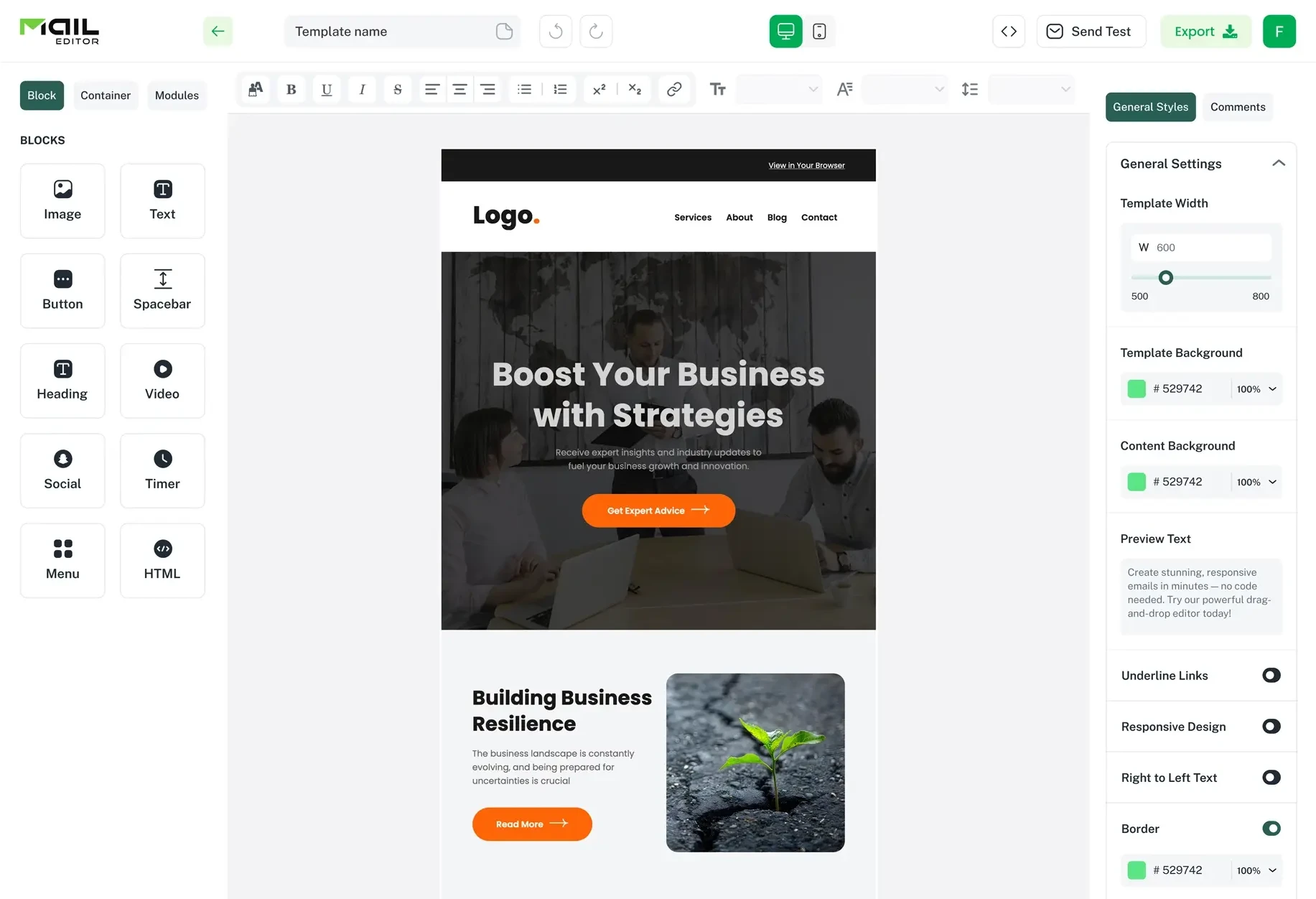

On the other hand, teams that reuse well-structured templates often see more consistent opens, simply because the emails feel more recognizable to readers. This is where a tool like MailEditor excels. It helps you keep layouts consistent across campaigns without slowing down your output.

Click-through rate signals intent, not success

This is another common misconception. Clicks are often treated as a sign of commitment from the reader, when in reality they usually signal interest.

In many campaigns, click-through rates hover in the low single digits, and that is normal. Spikes happen, but they rarely repeat without a reason.

What matters is where those clicks land. Dense layouts, buried links, or crowded sections dilute intent and can overwhelm the reader.

Instead, designing emails with clear spacing and a logical flow makes it easier for readers to act. When clicks drop after a design refresh, it is often the structure, not the message, that needs another look.
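Part of reading click-through rate correctly is counting people, not clicks, so repeat clicks do not inflate it. The sketch below assumes CTR is unique clickers divided by delivered emails and that your platform can export clicks as hypothetical (recipient_id, url) pairs; adjust both to match how your tool actually reports.

```python
def click_through_rate(click_events: list[tuple[str, str]], delivered: int) -> float:
    """Unique clickers as a percentage of delivered emails.

    click_events holds (recipient_id, url) pairs; repeat clicks by the same
    recipient are counted once.
    """
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    unique_clickers = {recipient for recipient, _url in click_events}
    return 100 * len(unique_clickers) / delivered


# Hypothetical click log for a send delivered to 200 recipients.
events = [
    ("r1", "/new-arrivals"),
    ("r1", "/new-arrivals"),  # repeat click, counted once
    ("r2", "/sale"),
    ("r3", "/new-arrivals"),
]

print(f"CTR: {click_through_rate(events, delivered=200):.1f}%")  # 3 of 200 -> 1.5%
```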

Click-to-open rate and why it reveals content alignment

The click-to-open rate removes delivery noise and asks a simpler question: did the content match the expectation that got the reader to open?

Healthy ranges vary widely, but many marketers see meaningful signals once this metric moves beyond the low teens.

When it slips, the issue usually lies with alignment. The promise was clear, but the body did not follow through.
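Click-to-open rate divides by opens rather than by delivered emails, which is what lets it isolate the body of the email. A minimal sketch, assuming unique clicks divided by unique opens and two hypothetical sends:

```python
def click_to_open_rate(unique_clicks: int, unique_opens: int) -> float:
    """Unique clicks as a percentage of unique opens."""
    if unique_opens <= 0:
        raise ValueError("unique_opens must be positive")
    return 100 * unique_clicks / unique_opens


# Two hypothetical sends with the same number of clicks but different opens.
send_a = click_to_open_rate(unique_clicks=360, unique_opens=3_000)
send_b = click_to_open_rate(unique_clicks=360, unique_opens=2_000)
print(f"send A CTOR: {send_a:.1f}%")  # 12.0%
print(f"send B CTOR: {send_b:.1f}%")  # 18.0%
```

If both sends reached the same number of inboxes, their click-through rates would be identical, yet send B's content turned a larger share of its openers into clickers.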

This is where layout discipline pays off. When the content flows predictably and calls to action are placed with intent, alignment improves. Over time, this metric becomes less about optimization tricks and more about honesty between the subject line and the substance of the email.

Delivery Metrics That Shape The Results You See

After an email campaign goes out, successful delivery is never the headline, nor the star metric. It sits in the background, easily overlooked when open and click rates take center stage. That is also why it causes so much confusion.

When delivery slips, engagement numbers start telling a different story, and it is not always obvious why.

In my experience, a small change here can quietly affect everything else. Open rates decline, and clicks feel inconsistent. Teams panic and start adjusting content or design, when the real issue happened before the email ever reached the inbox.

Delivery rate vs. inbox placement

Delivery rate sounds reassuring. If the message did not bounce, it feels like a win. Many people take delivery to mean their email was seen, which is a serious mistake. Successful delivery does not guarantee visibility. An email can be delivered and still land in a spam or promotions folder that no one checks.

In real campaigns, this gap creates false conclusions. A drop in engagement gets blamed on subject lines or timing, when inbox placement is the real culprit.
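The gap between the two numbers becomes concrete when you put them side by side. Delivery rate comes from your own send data, but inbox placement usually has to be estimated, commonly with seed-list tests that record which folder a message reached. The sketch below assumes a hypothetical list of folder labels from such a test, and extrapolating a small seed sample to the whole list is only a rough estimate.

```python
def delivery_rate(sent: int, bounced: int) -> float:
    """Share of sent emails that did not bounce, in percent."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return 100 * (sent - bounced) / sent


def inbox_placement_rate(seed_folders: list[str]) -> float:
    """Share of seed addresses where the message reached the inbox, in percent.

    seed_folders is a hypothetical format: one label per seed address,
    such as "inbox", "spam", or "missing".
    """
    if not seed_folders:
        raise ValueError("no seed results")
    return 100 * seed_folders.count("inbox") / len(seed_folders)


sent, bounced = 10_000, 150
seeds = ["inbox"] * 14 + ["spam"] * 5 + ["missing"]

delivered_pct = delivery_rate(sent, bounced)
inbox_pct = inbox_placement_rate(seeds)
estimated_inbox_reach = (sent - bounced) * inbox_pct / 100

print(f"delivery rate: {delivered_pct:.1f}%")          # 98.5%
print(f"estimated inbox placement: {inbox_pct:.0f}%")  # 70%
print(f"estimated emails in the inbox: {estimated_inbox_reach:.0f}")  # 6895
```

A 98.5% delivery rate looks healthy, yet under these assumed seed results only about 6,900 of the 9,850 delivered emails would reach an inbox.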

When messages stop showing up where readers expect them, behavior changes fast. Even strong content struggles when it is buried away where the reader won’t look.

Consistency plays a role here, too. Frequent, abrupt changes to layout or structure can sometimes trigger filters, while teams that keep templates stable and predictable often see fewer surprises over time. That makes a consistent build process more important than it seems.

Bounce rate is an early warning sign, not just a cleanup task

Bounce rate is a highly underrated metric and one of the earliest signals that something is off with your emails. When bounce rates creep upward, even slightly, the engagement metrics start losing context.

This is because it indicates that fewer real people are seeing the message, which drags everything else down.
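Because the warning sign is a slow creep rather than a single bad send, it helps to check the trend, not just the latest value. The sketch below assumes bounce rate is hard plus soft bounces divided by emails sent (many platforms report the two types separately) and treats three consecutive increases as "creeping", an arbitrary threshold chosen for illustration.

```python
def bounce_rate(hard_bounces: int, soft_bounces: int, sent: int) -> float:
    """Hard plus soft bounces as a percentage of emails sent."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return 100 * (hard_bounces + soft_bounces) / sent


def is_creeping_up(rates: list[float], min_steps: int = 3) -> bool:
    """True if each of the last `min_steps` sends bounced more than the one before."""
    if len(rates) < min_steps + 1:
        return False
    recent = rates[-(min_steps + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


# Hypothetical bounce rates (%) for the last five sends.
history = [0.4, 0.4, 0.5, 0.7, 0.9]
print(bounce_rate(hard_bounces=30, soft_bounces=20, sent=10_000))  # 0.5
print(is_creeping_up(history))  # True
```

None of these values would look alarming on its own, which is exactly why the trend check matters.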

When bounce rates rise, a few warning signs tend to show up together.

- Engagement drops across multiple campaigns without changes in content

- Performance varies wildly between similar sends

- Small list segments behave very differently from the rest

Delivery metrics never really stand out. They whisper, and the sooner you listen, the less time you spend fixing the wrong problems later.

Revenue Metrics Connect Email Performance to Business Outcomes

When revenue metrics become the topic of discussion, the tone shifts, because revenue sits outside the safe zone, so to speak.

It is wholly different from engagement metrics like open and click rates. Revenue lands heavier. Money either showed up or it didn’t, and that finality makes people stop arguing.

I always suggest taking these metrics seriously, because they influence what happens next. Budgets. Send frequency. Which ideas survive.

Sure, a strong number looks nice on paper (or, in this case, on your screen), but without a clear explanation behind it, it is fragile. A weaker result that clearly points to what worked, or what broke, tends to be far more useful.

Conversion rate beyond last-click thinking

Teams often treat conversion rates like the final verdict on an email. But real behavior is messier.

Some recipients read, think, close the tab, and come back later through search. Others click, get distracted, and only convert after a reminder or a notification, by which point several days may have passed. Yes, the email played a role, just not the final one.

Conversion rates usually move in small increments that matter. A tenth of a percent may seem infinitesimal, but it can still signal something meaningful when everything else stays steady.
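Whether a tenth of a point is meaningful depends heavily on how many emails went out. One standard way to check is a two-proportion z-test, sketched below with hypothetical numbers. It defines conversion rate as conversions per delivered email, which is only one of several definitions in use.

```python
from math import erfc, sqrt


def conversion_rate(conversions: int, delivered: int) -> float:
    """Conversions as a percentage of delivered emails (one common definition)."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    return 100 * conversions / delivered


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two rates (pooled z-test)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (p_b - p_a) / std_err
    # For a standard normal variable, P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    return erfc(abs(z) / sqrt(2))


# The same 1.2% -> 1.3% change at two hypothetical list sizes.
small = two_proportion_p_value(600, 50_000, 650, 50_000)
large = two_proportion_p_value(2_400, 200_000, 2_600, 200_000)
print(f"{conversion_rate(600, 50_000):.1f}% -> {conversion_rate(650, 50_000):.1f}%")
print(f"p-value with 50,000 per send: {small:.3f}")
print(f"p-value with 200,000 per send: {large:.3f}")
```

With 50,000 delivered emails per send, the 0.1-point lift is within normal noise (p around 0.15). At 200,000 per send, the same lift is unlikely to be chance (p below 0.01). Either way, the test only suggests a change is real; it does not explain why it happened.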

When conversion rates go down, the first reaction is often to rewrite the copy or change the offer. That sometimes works, but more often the problem lies elsewhere.

Where, you ask? Timing. Page load.

A landing page that doesn’t match what the message promised.

Attribution doesn’t help. Most teams know last-click reporting is incomplete, but it’s simple, so they still turn to it. This is why my advice is to treat conversion rate as directional rather than definitive. It leads to calmer decisions and fewer overcorrections.

Revenue per email and what averages hide

Revenue per email feels comforting. After all, it’s just one number. It makes comparisons easy and keeps charts clean.

Even though it looks ideal, the problem is what gets smoothed out.

On many occasions, I have found that a small slice of the list carries most of the revenue, while the rest barely responds.

The average hides that imbalance. It also hides volatility caused by sudden, significant design shifts that readers did not need.
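A quick way to see what the average smooths over is to compute it alongside the share of revenue coming from the top slice of recipients. The sketch below uses ten hypothetical recipients to keep it readable (real lists are far larger) and treats the "top 10%" cut-off as an illustrative assumption.

```python
def revenue_per_email(total_revenue: float, emails_sent: int) -> float:
    """Average revenue per email sent."""
    if emails_sent <= 0:
        raise ValueError("emails_sent must be positive")
    return total_revenue / emails_sent


def top_share(revenue_by_recipient: list[float], fraction: float = 0.1) -> float:
    """Share of total revenue that comes from the top `fraction` of recipients."""
    ranked = sorted(revenue_by_recipient, reverse=True)
    top_n = max(1, int(len(ranked) * fraction))
    total = sum(ranked)
    return sum(ranked[:top_n]) / total if total else 0.0


# Hypothetical revenue attributed to each of ten recipients of one send.
revenue = [400.0, 0.0, 0.0, 20.0, 0.0, 0.0, 15.0, 0.0, 5.0, 0.0]

print(f"revenue per email: ${revenue_per_email(sum(revenue), len(revenue)):.2f}")
print(f"share from the top 10% of recipients: {top_share(revenue):.0%}")
```

The average reports $44.00 per email, while one recipient accounts for about 91% of the revenue and six recipients spent nothing.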

When you pair this metric with consistency, it instantly becomes more useful. This is because revenue per email gets easier to interpret when layouts, structure, and calls to action remain familiar.

In contrast, sudden design experiments can change the numbers without really saying much about actual intent.

That’s why stable template workflows matter more than they get credit for.

The following table shows a simple way to separate what these metrics actually tell you.

| Metric | What it shows | What it helps you decide |
| --- | --- | --- |
| Conversion rate | Response after engagement | Whether the message and its destination align |
| Revenue per email | Average value per email sent | Which audiences or formats pull real weight |
| Assisted revenue | Influence across channels | How email supports the broader funnel |

Revenue metrics should do more than just close reports. They should help you identify and shape what you test next, what you repeat, and what you choose to ignore.

When used that way, they start earning their place and stop feeling intimidating.

Email Marketing Metrics You Should Track When Improving Design and Templates

Design is one of those areas where numbers feel distant until something goes wrong.

A small layout tweak seems harmless. A button shifts. A section disappears. Then clicks soften, or spike in strange places. That’s when design stops being about taste and starts leaving fingerprints in the data.

The tricky part is that design changes rarely announce themselves. The numbers react quietly. You only notice if you’re paying attention.

Interaction clues hidden inside clicks and scroll behavior

Clicks aren’t just signals of interest. They’re clues about movement.

When links near the top get attention while everything below gets ignored, it’s often a pacing issue, not a content problem. The same offer can perform very differently depending on where it sits and how the eye is guided toward it.

Scroll behavior tells a similar story. If engagement consistently drops at the same point across multiple sends, that section deserves scrutiny. Dense copy. An awkward visual break. A call to action that feels like it arrived too early or too late.

Sometimes the fix isn’t rewriting anything. It’s spacing. Clearer section breaks. Letting the message breathe.

These patterns only become visible when layouts stay consistent enough to compare. When every email looks completely different, cause and effect get blurry fast.
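Scroll depth is hard to measure reliably inside an inbox, so this sketch uses the position of clicked links as a rough proxy. It assumes each click can be mapped to a hypothetical section index (0 for the top of the email), which your platform may or may not export directly; sections with no clicks simply do not appear in the result.

```python
from collections import Counter


def click_share_by_section(click_sections: list[int]) -> dict[int, float]:
    """Map each section index (0 = top of the email) to its share of all clicks."""
    counts = Counter(click_sections)
    total = sum(counts.values())
    return {section: counts[section] / total for section in sorted(counts)}


def steepest_drop_after(shares: dict[int, float]) -> int:
    """Return the section after which the click share falls the most."""
    sections = sorted(shares)
    if len(sections) < 2:
        raise ValueError("need clicks in at least two sections")
    drops = {
        current: shares[current] - shares[nxt]
        for current, nxt in zip(sections, sections[1:])
    }
    return max(drops, key=drops.get)


# Hypothetical clicks from one send, tagged with the section that held the link.
clicks = [0] * 50 + [1] * 30 + [2] * 5 + [3] * 3

shares = click_share_by_section(clicks)
print({section: round(share, 2) for section, share in shares.items()})
print("largest drop after section", steepest_drop_after(shares))  # 1
```

Run across several sends that share the same template, a drop after the same section each time points to that section rather than to the offer, and that comparison only works because the layout held steady.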

Why template consistency influences performance over time

Consistency often gets mistaken for playing it safe. In reality, it creates speed.

When readers recognize a structure, they move through it with less friction. They know where to look. They hesitate less. That familiarity shows up in engagement long before anyone says it out loud.

This is where practical tools matter. Many teams rely on platforms like MailEditor to keep core layouts steady while adjusting content inside them. Not because it’s flashy, but because it keeps performance readable.

When templates stay familiar, metric shifts mean something. You can trace changes back to a headline, an image, or a call to action instead of guessing. Over time, that discipline turns design into a process that quietly compounds.

Choosing the Right Metrics Based on What Your Campaign Is Actually Trying to Do

Every team eventually hits a point where the numbers pile up and the conclusions thin out. That’s usually not a data problem. It’s a question problem.

Instead of asking which metrics matter in general, it helps to ask what this specific campaign is meant to do.

Goals change the lens. A re-engagement email shouldn’t be judged like a revenue push. A product announcement shouldn’t be measured the same way as a learning experiment. When the goal shifts, the signals worth paying attention to shift with it.

This isn’t about rigid frameworks. It’s more about honesty before the send goes out. What decision are you hoping this data will help you make next time?

In practice, experienced marketers tend to think about it like this:

- When the goal is warming up a quiet list, early engagement and signs of renewed attention matter most

- When the goal is driving action, depth of interaction and follow-through outweigh surface response

- When the goal is learning, consistency and comparability across sends become the priority

- When the goal is revenue, metrics tied directly to buying behavior carry the most weight

Problems start when every campaign gets judged by the same handful of numbers, regardless of intent. Reports get longer. Insights get thinner.

Choosing metrics with purpose doesn’t simplify reporting. It sharpens it. And that clarity is what turns numbers into lessons instead of noise.

When goals shift, meaning shifts. Metrics only tell the truth when they’re chosen in service of the decision you want to make next.

Conclusion

The longer you work with email, the more you realize that metrics aren’t there to judge you. They’re there to help you think.

When numbers start feeling stressful, it’s often because they’re being treated as answers instead of prompts. The real insights usually show up after you sit with the data for a while. You notice what barely moved. What shifted slowly. What stayed stubbornly the same.

That’s when patterns start connecting back to real choices made weeks earlier. A layout decision. A timing change. A habit that quietly stuck.

Improvement rarely comes from dramatic overhauls. It comes from repeatable behaviors. Keeping structures familiar enough to compare.

Watching how small changes ripple through engagement. Reviewing results with curiosity instead of defensiveness. Using tools like MailEditor to maintain that consistency, not for polish, but so the numbers stay readable over time.

Those habits don’t appear in dashboards, but they shape everything the dashboards show.

Over time, the email marketing metrics you should track stop feeling abstract. They become part of how you plan and refine your work.

Not because you tracked more numbers, but because you learned which ones deserved your attention.

And once that happens, email stops feeling unpredictable, even on days when the results aren’t what you hoped for.

Written by

A full-stack digital marketer and passionate blogger with more than seven years of hands-on experience helping brands grow, rank, and thrive online.
